AI is everywhere in 2026, and so are articles about AI tools. But most of them read the same: a numbered list, a feature table, and the same ten tools recycled from last year. This one is different. I have been building with these tools daily, and I want to share which ones have genuinely changed how I work, not just which ones have the best marketing.

Whether you are a solo developer shipping fast or part of a small team trying to cut down on manual overhead, here are the AI tools that are actually worth your time in 2026.

The Coding Assistants Worth Paying For

The AI coding assistant market has matured. Two years ago, the question was “should I use one?” In 2026, the question is “which one fits how I work?”

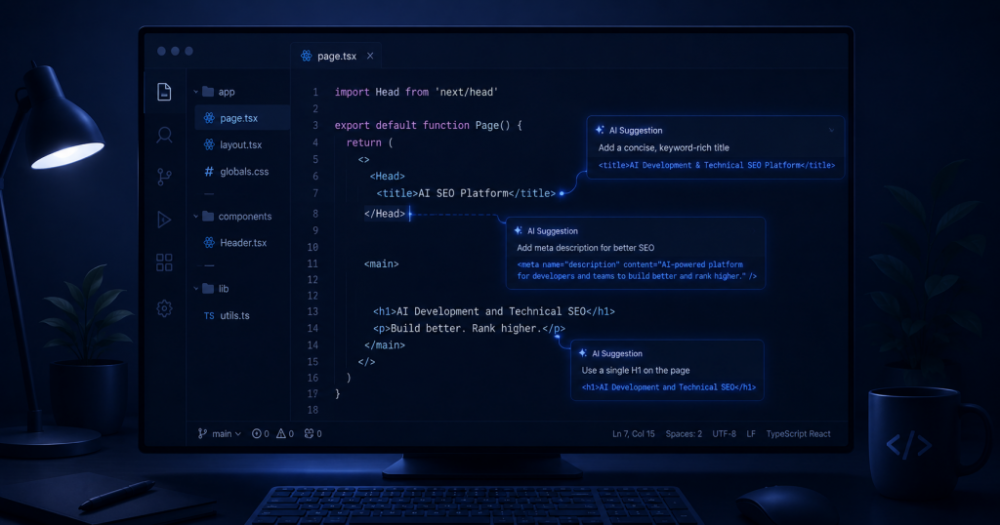

Cursor is the one most serious developers have settled on. It is a VS Code fork built entirely around AI, which means the AI is not a plugin bolted onto an existing editor. It indexes your whole codebase, so its suggestions draw on more than the file you have open. Ask it to refactor a function across five files and it does it. Ask it why something is broken and it usually gives you an answer that makes sense. The tab completion is fast. The chat is context-aware. At $20 a month, it has replaced GitHub Copilot for many developers who have tried both.

GitHub Copilot is still the default for teams that want reliability and tight GitHub integration. If you are working in a larger codebase with multiple people, Copilot’s consistency across team members is worth something. It is not as deep as Cursor, but it is predictable and it rarely gets in your way.

Claude Code is worth knowing about if you do heavy refactoring work. It runs from the terminal, not an IDE, and it handles large, complex changes across many files better than any browser-based tool. It is not something you use every day. It is something you use when the task is genuinely hard.

Code Review That Does Not Wait for a Senior Engineer

Code review is where development time disappears. A pull request sits for two days. The reviewer finally has time, leaves 12 comments. You fix them. They review again. The cycle repeats. Meanwhile, the feature is stuck.

CodeRabbit shortens this loop by leaving an automated first-pass review on every pull request the moment it is opened. It summarises what changed, flags potential bugs, catches obvious issues, and does it in under two minutes. Human reviewers come in after the obvious stuff has already been addressed, so fewer review rounds are spent on trivial comments and PR cycle times drop.

This matters more than it sounds. Every day a feature sits in review is a day it is not in front of users. For small teams especially, AI code review is one of the highest-leverage changes you can make to your workflow.

Testing Tools That Remove the Excuse

Nobody likes writing tests. Most developers write them because they have to, not because they want to. AI testing tools do not make testing enjoyable, but they do remove the “I don’t have time” excuse.

CodiumAI (now Qodo) generates unit tests for existing code. You point it at a function, it writes tests covering the main cases and a reasonable set of edge cases. The tests are not always perfect. They are usually good enough to catch regressions, which is the actual goal. Spending 20 minutes reviewing and adjusting AI-generated tests beats spending 90 minutes writing them from scratch.
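To make "main cases and a reasonable set of edge cases" concrete, here is a hand-written sketch of the kind of test file such a tool might produce. It is not actual Qodo output. The `slugify` function and every test name are hypothetical, invented only for illustration.

```python
import re


def slugify(title: str) -> str:
    """Hypothetical function under test: turn a title into a URL slug."""
    # Replace every run of non-alphanumeric characters with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    # Drop hyphens left over at the start or end.
    return slug.strip("-")


# Main cases
def test_basic_title():
    assert slugify("Hello World") == "hello-world"


def test_punctuation_collapsed():
    assert slugify("AI tools: 2026 edition!") == "ai-tools-2026-edition"


# Edge cases
def test_empty_string():
    assert slugify("") == ""


def test_only_symbols():
    assert slugify("!!!") == ""


def test_surrounding_whitespace():
    assert slugify("  padded  ") == "padded"
```

The tests use plain `assert` statements, so they run under pytest as written. The review step is where your 20 minutes go: checking that the edge cases encode the behaviour you actually want, not just the behaviour the code happens to have today.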

Deployment Without the Configuration Tax

This one gets overlooked in most AI tools roundups because deployment is not as visible as writing code. But it is where a lot of developer time disappears, especially for solo developers and small teams.

The traditional deployment workflow involves Dockerfiles, environment configs, CI/CD pipelines, dyno sizing decisions, and enough YAML to lose an afternoon in. AI-powered deployment platforms are changing this in the same way AI coding assistants changed writing code: by handling the setup automatically so you can focus on the actual work.

Platforms that use agentic AI to read your project, detect your stack, and deploy it without any configuration files are now mature enough to use for real production workloads. The pattern is consistent across frameworks: connect your GitHub repo, add environment variables, click deploy. The AI handles the rest, including subsequent deploys triggered by every git push.
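The "detect your stack" step can be pictured with a deliberately simplified sketch. It assumes detection starts from well-known manifest files. Real platforms almost certainly do far more than this, and the names `MARKERS`, `detect_stack`, and `detect_stack_in_dir` are hypothetical, not any vendor's API.

```python
from pathlib import Path
from typing import Iterable, Optional

# Manifest file -> stack label. Order matters: the first match wins,
# so a repo with both package.json and requirements.txt is labelled "node".
MARKERS = [
    ("package.json", "node"),
    ("requirements.txt", "python"),
    ("pyproject.toml", "python"),
    ("go.mod", "go"),
    ("Cargo.toml", "rust"),
    ("Gemfile", "ruby"),
]


def detect_stack(filenames: Iterable[str]) -> Optional[str]:
    """Return a stack label based on top-level file names, or None."""
    names = set(filenames)
    for marker, stack in MARKERS:
        if marker in names:
            return stack
    return None


def detect_stack_in_dir(root: str) -> Optional[str]:
    """Convenience wrapper: inspect the top level of a project directory."""
    return detect_stack(p.name for p in Path(root).iterdir())
```

Calling `detect_stack(["go.mod", "main.go"])` returns `"go"`, and a repo containing only a README returns `None`. An agentic platform layers much more on top of this kind of signal, such as choosing build commands and runtime versions, but the starting point is the same: read the project instead of asking you to describe it in YAML.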

For developers who want to understand how agentic AI deployment actually works under the hood, here is a detailed breakdown that covers the mechanics clearly.

The One Shift That Ties All of This Together

The pattern across every tool in this list is the same: AI handles the parts of the job that are necessary but do not require your judgment, so you can spend your time on the parts that do.

Writing boilerplate code does not require your judgment. A first-pass code review does not require your judgment. Writing the fifth unit test for a function does not require your judgment. Deciding what to build, how to architect it, and whether it is actually solving the right problem absolutely does.

The developers who are shipping fastest in 2026 are not the ones using the most AI tools. They are the ones who have figured out where AI removes friction and where human judgment still matters, and have built their workflow around that distinction.

The list above is a starting point. Which tools actually fit depends on how you work. But if you are still doing all of this by hand, you are spending cognitive budget on tasks that are no longer yours to do.